The pre-requisites for deploying on Azure

Azure Kubernetes Service (AKS) is an Azure service for deploying, managing, and scaling distributed, containerized workloads. You can provision an AKS cluster on Azure from the ground up using Terraform (infra-as-code) and then deploy the DIGIT platform services as config-as-code using Helm.

Know about AKS: https://www.youtube.com/watch?v=i5aALhhXDwc&ab_channel=DevOpsCoach

Know what is terraform: https://youtu.be/h970ZBgKINg

Azure subscription: If you don't have an Azure subscription, create a free account before you begin.

Install Azure CLI

Configure Terraform: Follow the directions in the article, Terraform and configure access to Azure

Azure service principal: Follow the directions in the Create the service principal section in the article, Create an Azure service principal with Azure CLI. Take note of the values for the appId, displayName, password, and tenant.

Install kubectl on your local machine which helps you interact with the Kubernetes cluster.

Install Helm, which helps you package the services along with their configurations, environments, secrets, etc. into Kubernetes manifests.

Find the pre-requisites for deploying DIGIT platform services on AWS

AWS account with admin access to provision infrastructure. You will need a paid AWS subscription.

Install kubectl (any version) on the local machine - it helps interact with the Kubernetes cluster.

Install Helm - this helps package the services, configurations, environments, secrets, etc. into Kubernetes manifests. Verify that the installed version of Helm is 3.0 or higher.

Refer to tfswitch documentation for different platforms. Terraform version 0.14.10 can be installed directly as well.

Run tfswitch and it will show a list of Terraform versions. Scroll down and select Terraform version 0.14.10 for the Infra-as-code (IaC) to provision cloud resources as code. This provides the desired resource graph and helps destroy the cluster in one go.

Install Golang

For Linux: Follow the instructions here to install Golang on Linux.

For Windows: Download the installer using the link here and follow the installation instructions.

For Mac: Download the installer using the link here and follow the installation instructions.

Install cURL - for making API calls

Install Visual Studio Code - for better code visualization/editing capabilities

Install Postman - to run digit bootstrap scripts

Install AWS CLI
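The Helm version requirement above (3.0 or higher) can be checked from a script. The following is a minimal sketch, not part of the DIGIT tooling: the function name helm_version_ok is hypothetical, and it assumes the version string has the form printed by `helm version --short` on Helm 3 (for example v3.12.3+g3a31588).

```shell
# Succeeds when a Helm version string such as "v3.12.3+g3a31588" is 3.0 or newer.
# Anything that does not start with a numeric major version is treated as unsupported.
helm_version_ok() {
    version=${1#v}          # strip the leading "v", if present
    major=${version%%.*}    # keep only the text before the first dot
    case $major in
        ''|*[!0-9]*) return 1 ;;   # empty or non-numeric major version
    esac
    [ "$major" -ge 3 ]
}
```

With Helm installed, it could be called as `helm_version_ok "$(helm version --short)" || echo "Helm 3.0 or higher is required"`.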

If you have any questions please write to us.

Make sure to use the appropriate discussion category and labels to address the issues better.

Provision infra for DIGIT on AWS using Terraform

Amazon Elastic Kubernetes Service (EKS) is an AWS service for deploying, managing, and scaling distributed and containerized workloads. With EKS, you can easily provision a cluster on AWS using Terraform, which automates the process. Then, deploy the DIGIT services configuration using Helm.

Know about EKS: https://www.youtube.com/watch?v=SsUnPWp5ilc

Know what is terraform: https://youtu.be/h970ZBgKINg

Setup infrastructure required for deploying DIGIT

DIGIT can be deployed on a public cloud like AWS, Azure or a private cloud.

Learn the basics of Kubernetes: https://www.youtube.com/watch?v=PH-2FfFD2PU&t=3s

Learn the basics of kubectl commands

Note: To deploy DIGIT using Github Actions, refer to the document - DIGIT Deployment Using GithubActions. With this installation approach, there's no need to manually create the infrastructure as GitHub Actions will automatically handle the creation and deployment of DIGIT.

Choose your cloud and follow the instructions to set up a Kubernetes cluster before deploying.

The image below illustrates the multiple components deployed. These include the EKS, Worker Nodes, Postgres DB, EBS Volumes, and Load Balancer.

Clone the DIGIT-DevOps repository:

Navigate to the cloned repository and checkout the release-1.28-Kubernetes branch:

Check if the correct credentials are configured using the command below. Refer to the attached doc to setup AWS Account on the local machine.
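The exact command is not reproduced on this page. As a hedged sketch, the check could be done with two standard AWS CLI commands: `aws configure list` shows the profile, masked keys, and region in use, and `aws sts get-caller-identity` confirms that AWS accepts those credentials. The wrapper function name check_aws_credentials is hypothetical.

```shell
# Prints the locally configured AWS profile and the identity AWS resolves it to.
check_aws_credentials() {
    if ! command -v aws >/dev/null 2>&1; then
        echo "aws CLI not found; install it first" >&2
        return 1
    fi
    aws configure list || return 1     # profile, masked access key, secret key, region
    aws sts get-caller-identity        # fails when the credentials are missing or invalid
}
```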

Make sure that the output of the above command reflects the AWS credentials you have set. Proceed once the details are confirmed. (If the credentials are not set, follow Step 2, Setup AWS account.)

Choose either method below to generate SSH key pairs

a. Use an online website (not recommended for a production setup; use it only for demo setups): https://8gwifi.org/sshfunctions.jsp

b. Use openssl:
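The openssl commands themselves are missing from this page. One common way to do it, shown here as a sketch with illustrative file names, is to generate a 2048-bit RSA private key with `openssl genpkey` and then derive the OpenSSH-format public key from it with `ssh-keygen -y`. The function name generate_ssh_keypair is hypothetical.

```shell
# Generates an RSA private key with OpenSSL and writes the matching OpenSSH public key.
# $1 = private key path, $2 = public key path (both default to illustrative names).
generate_ssh_keypair() {
    private_key=${1:-private_key.pem}
    public_key=${2:-ssh_public_key.pub}
    openssl genpkey -algorithm RSA -pkeyopt rsa_keygen_bits:2048 -out "$private_key" || return 1
    chmod 600 "$private_key"                         # keep the private key readable by the owner only
    ssh-keygen -y -f "$private_key" > "$public_key"  # print the public half in OpenSSH format
}
```

The resulting public key file contains a single line starting with ssh-rsa.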

Add the public key to your GitHub account.

Open the input.yaml file in VS Code using the command below:

code infra-as-code/terraform/sample-aws/input.yaml

If the command does not work, open the file in VS Code manually, or in any editor of your choice. Once the file is open, fill in the inputs.

Fill in the inputs as per the regex mentioned in the comments.

Go to infra-as-code/terraform/sample-aws and run init.go script to enrich different files based on input.yaml.

Once you have finished declaring the resources, begin deploying them.

Run the Terraform scripts to provision the infra required to deploy DIGIT on AWS.

CD (change directory) to the following directory and run the below commands to create the remote state.

Once the remote state is created, it is time to provision DIGIT infra. Run the below commands:

Important:

Terraform prompts for the DB password during the apply stage. Remember the password you provide. It must be at least 8 characters long; otherwise, RDS provisioning will fail.

The output of the apply command will be displayed on the console. Store this in a file somewhere. Values from this file will be used in the next step of deployment.
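Because a short DB password only surfaces as a failure during RDS provisioning, it can help to check the length before running apply. This is a minimal sketch: the function name db_password_ok is hypothetical, and it checks only the 8-character minimum stated above, not any other rule RDS may enforce.

```shell
# Succeeds only when the candidate password is at least 8 characters long.
db_password_ok() {
    [ "${#1}" -ge 8 ]
}
```

For example, `db_password_ok "$DB_PASSWORD" || echo "DB password must be at least 8 characters"`, where DB_PASSWORD is an illustrative variable name.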

2. Use this link to get the kubeconfig from EKS for the cluster. The region code is the default region of the availability zones in variables.tf, for example ap-south-1. The EKS cluster name should also have been set in variables.tf.

3. Verify that you can connect to the cluster by running the following command
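The two steps above can be sketched with the AWS CLI and kubectl. `aws eks update-kubeconfig` writes the cluster entry into your kubeconfig, and `kubectl get nodes` confirms connectivity. The function name connect_to_eks and the example arguments are illustrative; use the region and cluster name from your own configuration.

```shell
# Writes the kubeconfig entry for an EKS cluster and lists its nodes.
# $1 = AWS region code (for example ap-south-1), $2 = EKS cluster name.
connect_to_eks() {
    region=$1
    cluster_name=$2
    if [ -z "$region" ] || [ -z "$cluster_name" ]; then
        echo "usage: connect_to_eks <region> <cluster-name>" >&2
        return 2
    fi
    aws eks update-kubeconfig --region "$region" --name "$cluster_name" || return 1
    kubectl get nodes    # every worker node should be listed here
}
```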

At this point, your basic infra has been provisioned.

Note: Refer to the DIGIT deployment documentation to deploy DIGIT services.

To destroy the previously created infrastructure with Terraform, run the command below:

The ELB is not deployed via Terraform. It is created at deployment time by the setup of the Kubernetes Ingress, so it has to be deleted manually by deleting the ingress service.

kubectl delete deployment nginx-ingress-controller -n <namespace>

kubectl delete svc nginx-ingress-controller -n <namespace>

Note: Namespace can be either egov or jenkins.

Delete S3 buckets manually from the AWS console and verify if ELB got deleted.

If the ELB is not deleted, delete it from the AWS console.

Run terraform destroy.

Sometimes all artefacts associated with a deployment cannot be deleted through Terraform. For example, RDS instances might have to be deleted manually. It is recommended to log in to the AWS management console and look through the infra to delete any remnants.

Steps to setup the AWS account for deployment

Annexures:


Provision infra for DIGIT on Azure using Terraform

Azure Kubernetes Service (AKS) manages your hosted Kubernetes environment. AKS allows you to deploy and manage containerized applications without container orchestration expertise. AKS also enables you to do many common maintenance operations without taking your app offline. These operations include provisioning, upgrading, and scaling resources on demand.

Deployment on SDC

Running Kubernetes on-premise gives a cloud-native experience on SDC when it comes to deploying DIGIT.

Whether States have their own on-premise data centre or have decided to forego the various managed cloud solutions, there are a few things one should know when getting started with on-premise K8s.

One should be familiar with Kubernetes and its control plane, which consists of the kube-apiserver, kube-scheduler, kube-controller-manager and an etcd datastore. For managed cloud solutions, it also includes the cloud-controller-manager. This is the component that connects the cluster to external cloud services to provide networking, storage, authentication, and other support features.

To successfully deploy a bespoke Kubernetes cluster and achieve a cloud-like experience on SDC, one needs to replicate all the same features you get with a managed solution. At a high level, this means that we probably want to:

Automate the deployment process

Choose a networking solution

Choose the right storage solution

Handle security and authentication

The subsequent sections look at each of these challenges individually and provide enough context to help you get started.

Using a tool like Ansible can make deploying Kubernetes clusters on-premise trivial.

When deciding to manage your own Kubernetes clusters, set up a few proof-of-concept (PoC) clusters to learn how everything works, run performance and conformance tests, and try out different configuration options.

After this phase, automating the deployment process is an important, if not necessary, step to ensure consistency across any clusters you build. For this, you have a few options, but the most popular are:

Kubeadm: a low-level tool that helps you bootstrap a minimum viable Kubernetes cluster that conforms to best practices

Kubespray: an Ansible playbook that helps deploy production-ready clusters

If you are already using Ansible, Kubespray is a great option. Otherwise, we recommend writing automation around Kubeadm using your preferred playbook tool after using it a few times. This will also increase your confidence and knowledge of Kubernetes.

Pre-requisites for deployment on SDC

Check the hardware and software pre-requisites for deployment on SDC.

Kubernetes nodes

Ubuntu 18.04

SSH

Privileged user

Python

Bastion machine

Ansible

Git

Python

Since there are many DIGIT services and the development code is part of various git repos, one needs to understand the concept of cicd-as-service which is open-sourced. This page guides you through the process of creating a CI/CD pipeline.

The initial steps for integrating any new service/app to the CI/CD are discussed below.

Once the desired service is ready for integration: decide the service name, type of service, and if DB migration is required or not. While you commit the source code of the service to the git repository, the following file should be added with the relevant details which are mentioned below:

build-config.yml – It is present under the build directory in the repository

This file contains the below details used for creating the automated Jenkins pipeline job for the newly created service.

While integrating a new service/app, the above content needs to be added to the build-config.yml file of that app repository. For example: to onboard a new service called egov-test, the build-config.yml should be added as mentioned below.

If a job requires multiple images to be created (DB Migration) then it should be added as below,

Note - If a new repository is created then the build-config.yml is created under the build folder and the config values are added to it.

The git repository URL is then added to the Job Builder parameters

When the Jenkins Job => job builder is executed, the CI pipeline is created automatically based on the above details in build-config.yml. For example, the egov-test job is created in the builds/DIGIT-OSS/core-services folder in Jenkins because its build-config.yml was edited under core-services, and it must be on the "master" branch. Once the pipeline job is created, it can be executed for any feature branch with build parameters specifying the branch to be built (master or feature branch).

As a result of the pipeline execution, the respective app/service docker image is built and pushed to the Docker repository.

On the repo, provide read-only access to the GitHub users created during the CI/CD deployment.

The Jenkins CI pipeline is configured and managed 'as code'.

If the git repository ssh URL is available, build the Job-Builder Job.

If the git repository URL is not available, check and add the same team.

The services are deployed and managed on a Kubernetes cluster in cloud platforms like AWS, Azure, GCP, OpenStack, etc. Here, we use helm charts to manage and generate the Kubernetes manifest files and use them for further deployment to the respective Kubernetes cluster. Each service is created as charts which have the below-mentioned files.

Note: The steps below are only for the introduction and implementation of new services.

If you are going to introduce a new module with the help of multiple services, we suggest you create a new Directory with your module name.

Example:

This chart can also be modified further based on user requirements.

Deploying manifests to the Kubernetes cluster is straightforward. There are Jenkins jobs for each state, and they are environment-specific. We need to provide the image name or the service name for the respective Jenkins deployment job.

The deployment Jenkins job internally performs the following operations:

Reads the image name or the service name given and finds the chart that is specific to it.

Generates the Kubernetes manifests files from the chart using the helm template engine.

Executes the deployment manifest with the specified docker image(s) on the Kubernetes cluster.

To deploy the solution to the cloud there are several ways that we can choose. In this case, we will use terraform Infra-as-code.

Terraform is an open-source infrastructure as code (IaC) software tool that allows DevOps engineers to programmatically provision the physical resources an application requires to run.

Infrastructure as code is an IT practice that manages an application's underlying IT infrastructure through programming. This approach to resource allocation allows developers to logically manage, monitor and provision resources -- as opposed to requiring that an operations team manually configure each required resource.

Terraform users define infrastructure by using a JSON-like configuration language called HCL (HashiCorp Configuration Language). HCL's simple syntax makes it easy for DevOps teams to provision and re-provision infrastructure across multiple clouds and on-premises data centres.

Before we provision the cloud resources, we need to understand and be sure about what resources need to be provisioned by Terraform to deploy DIGIT. The following picture shows the various key components. (AKS, Node Pools, Postgres DB, Volumes, Load Balancer)

We have already written a Terraform script that you can reuse. It provisions the production-grade DIGIT infra and can be customized with user-specific configuration.

Save the file and exit the editor.

Once you have finished declaring the resources, you can deploy all resources.

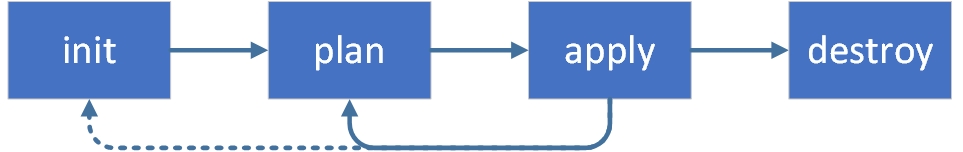

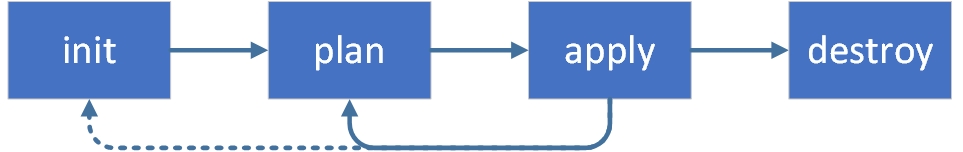

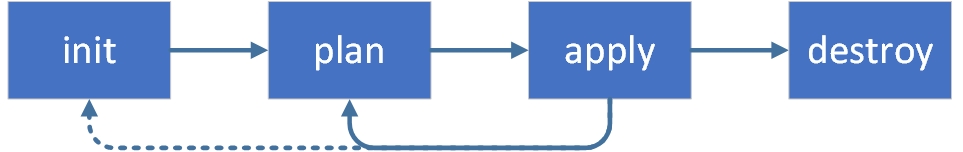

terraform init: initializes a working directory containing Terraform configuration files.

terraform plan: creates an execution plan, which lets you preview the changes that Terraform plans to make to your infrastructure.

terraform apply: executes the actions proposed in a Terraform plan to create or update infrastructure.
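The three commands are normally run in this order from the directory that holds the Terraform configuration. The sketch below wraps them in a hypothetical helper, terraform_provision, that stops at the first failing stage. The directory argument is a placeholder for wherever your Terraform files live.

```shell
# Runs init, plan, and apply in order inside the given directory.
# A subshell is used so the caller's working directory is left unchanged.
terraform_provision() {
    workdir=${1:-.}
    (
        cd "$workdir" || exit 1
        terraform init || exit 1     # download providers and set up the backend
        terraform plan || exit 1     # preview the changes before touching anything
        terraform apply              # asks for confirmation, then creates the resources
    )
}
```

Running plan before apply gives you a chance to review the resource graph; apply still asks for confirmation before it changes anything.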

After the complete creation, you can see resources in your Azure account.

Now that we know what the Terraform script does, the resource graph that it provisions, and what custom values should be given for your environment, the next step is to run the Terraform scripts to provision the infra required to deploy DIGIT on Azure.

Use the cd command to move into the following directory, run the following commands one by one, and watch the output closely.

The Kubernetes tools can be used to verify the newly created cluster.

Once the terraform apply execution is complete, it generates the Kubernetes configuration file. You can also get it from the Terraform state.

Use the below command to get the kubeconfig. It automatically stores your kubeconfig in the .kube folder.

3. Verify the health of the cluster.
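The commands for these steps are not reproduced on this page. As a hedged sketch, the Azure CLI command `az aks get-credentials` merges the cluster credentials into ~/.kube/config, and the node status column of `kubectl get nodes` can be used as a basic health check. The function names, the resource group, and the cluster name are placeholders.

```shell
# Fetches AKS credentials into ~/.kube/config.
# $1 = resource group, $2 = AKS cluster name (use the values from your Terraform variables).
get_aks_kubeconfig() {
    az aks get-credentials --resource-group "$1" --name "$2"
}

# Succeeds only when kubectl lists at least one node and every node has STATUS "Ready".
# A cordoned node ("Ready,SchedulingDisabled") is deliberately counted as not ready.
all_nodes_ready() {
    kubectl get nodes --no-headers | awk '
        $2 != "Ready" { bad++ }
        END { exit (NR == 0 || bad > 0) ? 1 : 0 }
    '
}
```

For example: `get_aks_kubeconfig my-resource-group my-aks-cluster && all_nodes_ready && echo "cluster is healthy"`.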

Steps to setup CI/CD on SDC

Kubespray is a composition of Ansible playbooks, inventory, provisioning tools, and domain knowledge for generic OS/Kubernetes cluster configuration management tasks. Kubespray provides:

a highly available cluster

composable attributes

support for most popular Linux distributions

continuous-integration tests

Fork the repos below to your GitHub Organization account

Go lang (version 1.13.X)

Install kubectl on your local machine to interact with the Kubernetes cluster.

Install Helm to help package the services along with the configurations, environment, secrets, etc. into Kubernetes manifests.

One Bastion machine to run Kubespray

HA-PROXY machine which acts as a load balancer with a public IP. (CPU: 2 cores, Memory: 4 GB)

One machine which acts as a master node. (CPU: 2 cores, Memory: 4 GB)

One machine which acts as a worker node. (CPU: 8 cores, Memory: 16 GB)

iSCSI volumes for persistent volumes. (Quantity: 2)

kaniko-cache-claim: 10 GB

Jenkins home: 100 GB

Kubernetes nodes

Ubuntu 18.04

SSH

Privileged user

Python

Bastion machine

Ansible

git

Python

Run and follow instructions on all nodes.

Ansible needs Python to be installed on all the machines.

apt-get update && apt-get install python3-pip -y

All the machines should be in the same network with Ubuntu or CentOS installed.

The SSH key should be generated on the Bastion machine and must be copied to all the servers that are part of your inventory.

Generate the SSH key: ssh-keygen -t rsa

Copy over the public key to all nodes.

Clone the official repository

Install dependencies from requirements.txt

Create Inventory

where mycluster is the custom configuration name. Replace with whatever name you would like to assign to the current cluster.

Create inventory using an inventory generator.

Once it runs, you can see an inventory file that looks like the below:

Review and change parameters under inventory/mycluster/group_vars

Deploy Kubespray with Ansible Playbook - run the playbook as Ubuntu

The option --become is required, for example, for writing SSL keys in /etc/, installing packages, and interacting with various system daemons.

Note: Without --become - the playbook will fail to run!

Kubernetes cluster will be created with three masters and four nodes with the above process.

The kubeconfig will be generated in a .kube folder. The cluster can be accessed via this kubeconfig.
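The commands for the steps above are not reproduced on this page. The sketch below follows the workflow from the upstream Kubespray README of older releases, which is an assumption about the version in use: the contrib/inventory_builder script has been removed from recent Kubespray releases. The function names, the remote user, and the node IPs are placeholders.

```shell
# Copies the Bastion machine's public key to each node so Ansible can log in without a password.
# $1 = remote user, remaining arguments = node IPs or hostnames.
copy_key_to_nodes() {
    remote_user=$1
    shift
    for node in "$@"; do
        ssh-copy-id -i "$HOME/.ssh/id_rsa.pub" "$remote_user@$node" || return 1
    done
}

# Clones Kubespray, builds an inventory named "mycluster" for the given node IPs,
# and runs the cluster playbook with privilege escalation.
# The body runs in a subshell so the caller's working directory is left unchanged.
deploy_kubespray() (
    git clone https://github.com/kubernetes-sigs/kubespray.git || exit 1
    cd kubespray || exit 1
    pip3 install -r requirements.txt || exit 1
    cp -rfp inventory/sample inventory/mycluster || exit 1
    # Assumption: an older Kubespray release that still ships contrib/inventory_builder.
    CONFIG_FILE=inventory/mycluster/hosts.yaml \
        python3 contrib/inventory_builder/inventory.py "$@" || exit 1
    ansible-playbook -i inventory/mycluster/hosts.yaml --become --become-user=root cluster.yml
)
```

For example: `copy_key_to_nodes ubuntu 10.10.1.3 10.10.1.4` followed by `deploy_kubespray 10.10.1.3 10.10.1.4`. Review inventory/mycluster/group_vars before running the playbook.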

Install haproxy package in a haproxy machine that will be allocated for proxy

sudo apt-get install haproxy -y

IPs need to be whitelisted as per the requirements in the config.

sudo vim /etc/haproxy/haproxy.cfg

iSCSI volumes will be provided by the SDC team as per the requisition, and they can be used for StatefulSets.

Job Builder – Job Builder is a generic Jenkins job that automatically creates the Jenkins pipeline, which is then used to build the application, create its docker image, and push the image to the Docker repository. The Job Builder job requires the git repository URL as a parameter. It clones the respective git repository, reads the build-config.yml file of each git repository, and uses it to create the service build job.

Check and add your repo ssh URL in

To deploy a new service, you need to create a new helm chart for it (refer to the above example). The chart should be created under the charts/helm directory in the repository.

You can refer to the existing helm chart structure

Ideally, one would write the Terraform script from scratch using this doc.

Clone the following repo, where all the sample Terraform scripts are available for you to leverage.

2. Change the according to your requirements.

3. Declare the variables in

4. Create a Terraform output file () and paste the following code into the file.

All the worker nodes should show the status Ready.

Note: Refer to the DIGIT deployment documentation to deploy DIGIT services.

Refer to the .

Deploy DIGIT using Kubespray

Kubespray is a composition of Ansible playbooks, inventory, provisioning tools, and domain knowledge for generic OS/Kubernetes cluster configuration management tasks. Kubespray provides:

a highly available cluster

composable attributes

support for most popular Linux distributions

continuous-integration tests

Before we can get started, we need a few prerequisites to be in place. This is what we are going to need:

A host with Ansible installed. Click here to learn more about Ansible. Find the Ansible installation details here.

You should also set up an SSH key pair to authenticate to the Kubernetes nodes without using a password. This permits Ansible to perform optimally.

A few servers/hosts/VMs to serve as targets for deploying Kubernetes. I am using Ubuntu 18.04, and my servers each have 4 GB RAM and 2 vCPUs. This is fine for my testing purposes, which I use to try out new things using Kubernetes. You need to be able to SSH into each of these nodes as root using the SSH key pair mentioned above.

The above will do the following:

Create a new Linux User Account for use with Kubernetes on each node

Install Kubernetes and containers on each node

Configure the Master node

Join the Worker nodes to the new cluster

Ansible needs Python to be installed on all the machines.

apt-get update && apt-get install python3-pip -y

All the machines should be in the same network with Ubuntu or CentOS installed.

The SSH key should be generated on the Bastion machine and must be copied to all the servers that are part of your inventory.

Generate the SSH key: ssh-keygen -t rsa

Copy over the public key to all nodes.

Clone the official repository

Install dependencies from requirements.txt

Create Inventory

where mycluster is the custom configuration name. Replace with whatever name you would like to assign to the current cluster.

Create inventory using an inventory generator.

Once it runs, you can see an inventory file that looks like the below:

Review and change parameters under inventory/mycluster/group_vars

Deploy Kubespray with Ansible Playbook - run the playbook as Ubuntu

The option --become is required, for example, for writing SSL keys in /etc/, installing packages, and interacting with various system daemons.

Note: Without --become - the playbook will fail to run!

Kubernetes cluster will be created with three masters and four nodes using the above process.

The kubeconfig will be generated in a .kube folder. The cluster can be accessed via this kubeconfig.

Install haproxy package in a haproxy machine that will be allocated for proxy

sudo apt-get install haproxy -y

IPs need to be whitelisted as per the requirements in the config.

sudo vim /etc/haproxy/haproxy.cfg

iSCSI volumes will be provided by the SDC team as per the requisition, and they can be used for StatefulSets.

Note: Please refer to the DIGIT deployment documentation to deploy DIGIT services.

This tutorial walks you through how to set up CI/CD.

Terraform helps you build a graph of all your resources and parallelizes the creation or modification of any non-dependent resources. Thus, Terraform builds infrastructure as efficiently as possible while providing the operators with clear insight into the dependencies on the infrastructure.

Fork the repos below to your GitHub Organization account

Go lang (version 1.13.X)

AWS account with admin access to provision EKS Service. Try subscribing to a free AWS account to learn the basics. There is a limit on what is offered as free. This demo requires a commercial subscription to the EKS service. The cost for a one or two days trial might range between Rs 500-1000. (Note: Post the demo, for the internal folks, eGov will provide a 2-3 hrs time-bound access to eGov's AWS account based on the request and the available number of slots per day).

Install kubectl on your local machine to interact with the Kubernetes cluster.

Install Helm to help package the services along with the configurations, environment, secrets, etc. into Kubernetes manifests.

Install terraform version (0.14.10) for the Infra-as-code (IaC) to provision cloud resources as code and with desired resource graph. It also helps destroy the cluster in one go.

Install AWS CLI on your local machine so that you can use AWS CLI commands to provision and manage the cloud resources on your account.

Install AWS IAM Authenticator to help authenticate your connection from your local machine and deploy DIGIT services.

Use the AWS IAM User credentials provided for the Terraform (Infra-as-code) to connect to the AWS account and provision the cloud resources.

You will receive a Secret Access Key and Access Key ID. Save the keys.

Open the terminal and run the command given below. The AWS CLI is already installed and the credentials are saved. (Provide the credentials, leave the region and output format blank).

The above creates the following file on your machine as /Users/.aws/credentials.

Before we provision the cloud resources, we need to understand and be sure about what resources need to be provisioned by terraform to deploy CI/CD.

The following is the resource graph that we are going to provision using terraform in a standard way so that every time and for every environment, the infra is the same.

EKS Control Plane (Kubernetes master)

Worker node group (VMs with the estimated number of vCPUs, Memory)

EBS Volumes (Persistent volumes)

VPCs (Private networks)

Users to access, deploy and read-only

Ideally, one would write the terraform script from scratch using this doc.

Here we have already written the terraform script that provisions the production-grade DIGIT Infra and can be customized with the specified configuration.

Clone the DIGIT-DevOps GitHub repo. The terraform script to provision the EKS cluster is available in this repo. The structure of the files is given below.

Here, you will find the main.tf under each of the modules that have the provisioning definition for resources like EKS cluster, storage, etc. All these are modularized and react as per the customized options provided.

Example:

VPC Resources -

VPC

Subnets

Internet Gateway

Route Table

EKS Cluster Resources -

IAM Role to allow EKS service to manage other AWS services

EC2 Security Group to allow networking traffic with the EKS cluster

EKS Cluster

EKS Worker Nodes Resources -

IAM role allowing Kubernetes actions to access other AWS services

EC2 Security Group to allow networking traffic

Data source to fetch the latest EKS worker AMI

AutoScaling launch configuration to configure worker instances

AutoScaling group to launch worker instances

Storage Module -

Configuration in this directory creates an EBS volume and attaches it.

The following main.tf creates an S3 bucket that stores the Terraform state, so that every execution is tracked.

The following main.tf contains the detailed resource definitions that need to be provisioned.

Dir: DIGIT-DevOps/Infra-as-code/terraform/egov-cicd

Define your configurations in variables.tf and provide the environment-specific cloud requirements. The same terraform template can be used to customize the configurations.

Following are the values that you need to mention in the following files. The blank ones will prompt for inputs during execution.

We have covered what the terraform script does, the resources graph that it provisions and what custom values should be given with respect to the selected environment.

Now, run the terraform scripts to provision the infra required to Deploy DIGIT on AWS.

Use the 'cd' command to change to the following directory and run the following commands. Check the output.

After successful execution, the following resources get created and can be verified by the command "terraform output".

s3 bucket: to store terraform state

Network: VPC, security groups

IAM users auth: using keybase to create admin, deployer, the user

Use the URL https://keybase.io/ to create your own PGP key. This creates both public and private keys on the machine. Upload the public key to the keybase account that you have just created, give it a name, and make sure you reference that name in your Terraform configuration. This allows you to encrypt sensitive information.

Example: Create a user keybase. This is "egovterraform" in the case of eGov. Upload the public key here - https://keybase.io/egovterraform/pgp_keys.asc

Use this portal to Decrypt the secret key. To decrypt the PGP message, upload the PGP Message, PGP Private Key and Passphrase.

EKS cluster: with master(s) & worker node(s).

Storage(s): for es-master, es-data-v1, es-master-infra, es-data-infra-v1, zookeeper, kafka, kafka-infra.

Use this link to get the kubeconfig file from EKS. This enables you to connect to the cluster from your local machine and deploy DIGIT services to the cluster.

Finally, verify that you are able to connect to the cluster by running the command below:

Voila! You are all set and can now deploy Jenkins.

Post infra setup (Kubernetes Cluster), we start with deploying the Jenkins and kaniko-cache-warmer.

Sub domain to expose CI/CD URL

GitHub Oauth App (this provides you with the clientId, clientSecret)

Under Authorization callback URL, enter the URL below (replace <domain_name> with your domain): https://<domain_name>/securityRealm/finishLogin

Generate a new ssh key for the above user (this provides the ssh public and private keys)

Add the earlier created ssh public key to GitHub user account

Add ssh private key to the gitReadSshPrivateKey

Using the previously created GitHub user, generate a personal read-only access token

Docker hub account details (username and password)

SSL certificate for the sub-domain

Prepare a <ci.yaml> master config file and a <ci-secrets.yaml> file. You can name these files as desired. They have the following configurations:

credentials, secrets (you need to encrypt these using sops and create a ci-secrets.yaml separately)

Add subdomain name in ci.yaml

Check and add your project specific ci-secrets.yaml details (like github Oauth app clientId, clientSecret, gitReadSshPrivateKey, gitReadAccessToken, dockerConfigJson, dockerUsername and dockerPassword)

To create a Jenkins namespace mark this flag true

Add your environment-specific kubeconfigs under kubConfigs like https://github.com/egovernments/DIGIT-DevOps/blob/release/config-as-code/environments/ci-demo-secrets.yaml#L50

KubeConfig environment name and deploymentJobs name from ci.yaml should be the same

Update the CIOps and DIGIT-DevOps repo ssh url with the forked repo's ssh url.

Make sure earlier created github users have read-only access to the forked DIGIT-DevOps and CIOps repos.

SSL certificate for the sub-domain.

Update the DOCKER_NAMESPACE with your docker hub organization name.

Update the repo name "egovio" with your docker hub organization name in buildPipeline.groovy

Remove the below env:

Jenkins is launched. You can access the same through your sub-domain configured in ci.yaml.